How to Evaluate AI Sales Agent Platforms: A Structured Framework

by Stella L

A structured five-stage evaluation framework for comparing AI sales agent platforms.

Most teams evaluate AI sales agent platforms the same way. They sit through four or five demos, compare feature lists, collect pricing quotes, and pick the vendor with the most impressive presentation. This approach is fast but unreliable. It evaluates vendors on their terms, and the vendor with the best demo team wins regardless of which platform actually fits your business.

The cost of a wrong decision here is significant. Implementation time, data migration, team workflow changes, and opportunity cost during ramp all compound. Switching platforms six months in because the original choice did not fit your operational reality is a setback most sales teams cannot afford.

A structured evaluation process takes more time upfront but produces a more defensible decision. This article provides a five-stage framework that starts with your specific business requirements and works outward through execution capability, intelligence depth, operational fit, and commercial validation. Each stage includes the specific questions to ask and the criteria that matter for making a clear comparison.

Stage 1: Define Your Business Requirements Profile

This is the most important stage in the evaluation process, and the one most teams skip entirely. Without a clear picture of your own requirements, every vendor conversation becomes a feature comparison with no anchor point.

Four variables need to be defined before you talk to any vendor.

Outbound model scope. How many target markets are you operating in or planning to enter? How many languages do you need outreach to cover? A single-region team evaluating platforms for North American outreach has fundamentally different requirements than a team selling into 10 or more markets across multiple languages. The scope of your outbound model determines which capabilities carry the most weight in your evaluation.

Team structure. What does your current sales team look like, and where does an AI agent fit? Consider whether you are augmenting an existing SDR team with AI support, replacing manual outbound processes entirely, or building outbound capability from scratch. Each scenario shifts which platform capabilities matter most. A team with experienced SDRs needs strong collaboration features and clear handoff workflows. A team replacing manual outbound entirely needs maximum automation depth and autonomous execution.

Pipeline expectations. Define your volume targets, meeting quality standards, and sales cycle benchmarks before you evaluate any platform. These numbers become your measurement baseline. Without them, you will end up accepting vendor-defined success metrics that may not match how your business actually evaluates performance.

Technical environment. Document your current CRM, sales tools, data sources, and any compliance requirements specific to your industry or markets. This inventory becomes your compatibility checklist during evaluation.

The output of this stage should be a weighted scorecard. Assign priority levels to each evaluation dimension in Stages 2 through 5: critical, important, or nice-to-have. A global team might weight multilingual capability as critical and mark other dimensions as important. A single-market team might weight automation depth and personalization quality as critical.

This scorecard becomes your consistent reference point across every vendor conversation. Without it, teams fall into a common trap: shifting evaluation criteria based on whichever vendor they spoke with most recently.

Stage 2: Evaluate Execution Capability

This stage examines how well the platform performs the core outbound workflow. Execution capability is where the most measurable differences between platforms appear.

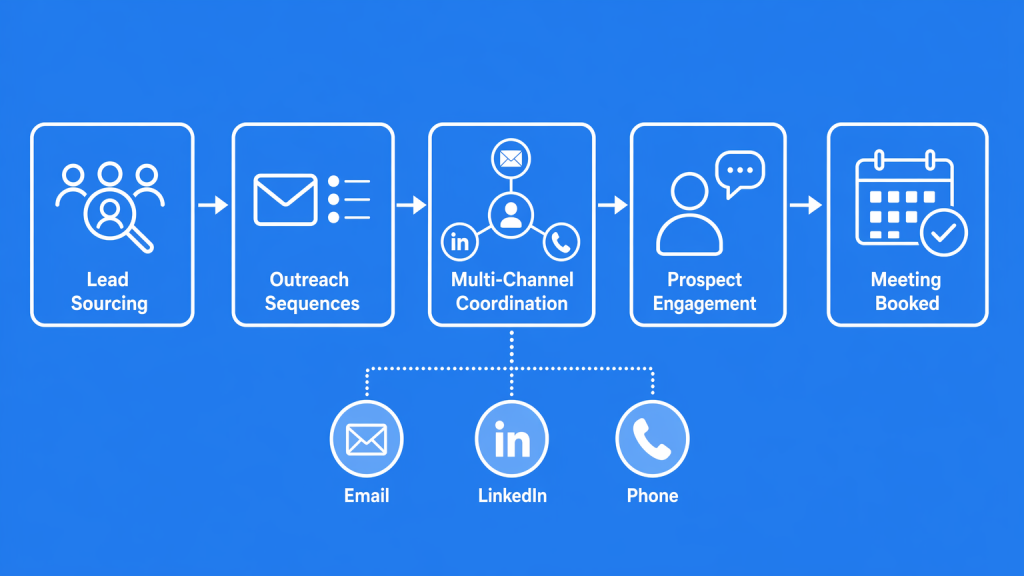

Automation depth. Map the full outbound workflow from lead sourcing through meeting booking. Identify exactly which steps the platform handles independently. The strongest platforms cover the entire chain with minimal manual intervention between stages. Others automate certain segments well but require human bridging between steps, such as manual lead list uploads, separate sequence configuration for each channel, or hand-managed scheduling workflows. Ask each vendor to walk through the complete workflow and specify precisely where their automation starts and stops. A clear workflow map is worth more than any feature list.
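One way to keep the workflow walkthrough honest is to record it as a simple map of steps. Flag each step as automated or manual, then list the gaps a human has to bridge. The sketch below is a hypothetical Python illustration. The step names and the `WorkflowStep`, `manual_handoffs`, and `automation_coverage` names are assumptions for this example, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStep:
    name: str
    automated: bool  # True if the platform handles this step without human input


def manual_handoffs(steps: list[WorkflowStep]) -> list[str]:
    """Return the names of steps that still need a human to bridge the workflow."""
    return [step.name for step in steps if not step.automated]


def automation_coverage(steps: list[WorkflowStep]) -> float:
    """Fraction of workflow steps the platform handles on its own."""
    if not steps:
        return 0.0
    return sum(step.automated for step in steps) / len(steps)


# Hypothetical map for one vendor, filled in from its workflow walkthrough.
vendor_a = [
    WorkflowStep("lead sourcing", True),
    WorkflowStep("lead list upload", False),
    WorkflowStep("email sequence", True),
    WorkflowStep("LinkedIn sequence", False),
    WorkflowStep("reply handling", True),
    WorkflowStep("meeting booking", True),
]

print(manual_handoffs(vendor_a))  # ['lead list upload', 'LinkedIn sequence']
print(f"{automation_coverage(vendor_a):.0%}")  # 67%
```

If you fill in the same map for each vendor, you can compare directly where each vendor's automation starts and stops. The coverage percentage is only a rough summary. A single manual step in the middle of the chain can cost more time than two at the edges.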

Multi-channel coordination. How does the platform orchestrate outreach across email, LinkedIn, phone, and other channels? The basic capability to send messages across multiple channels is widely available. The differentiator is coordination quality. Does the system run parallel sequences across channels with simple timing rules, or does it dynamically adjust channel selection and sequencing based on how each prospect actually engages? Ask for channel-specific delivery rates in your target regions, and ask how the system responds when a prospect is active on one channel and unresponsive on another.

Multilingual and multi-market readiness. For teams operating across multiple markets, this dimension often becomes the decisive differentiator. Two questions separate strong platforms from adequate ones. First, how many languages are validated with actual campaign performance data, as opposed to languages that are technically supported based on underlying model capability? Second, what level of cultural adaptation is built into the outreach logic beyond language translation? A platform that adjusts communication style, formality levels, and follow-up cadences based on market norms delivers meaningfully better results than one that translates the same sequence structure into different languages. Ask for sample output in your specific target languages and any available performance benchmarks from comparable markets.

Speed to value. What does the real timeline look like from contract signing to first meaningful campaign results? This varies more than most buyers expect. Some platforms deliver initial results within days because they bring substantial pre-built intelligence and require minimal customer-specific configuration. Others require weeks of setup, data preparation, and training cycles before producing useful output. Ask each vendor for a specific implementation timeline with milestones, and validate that timeline against references from customers with a similar scope to yours.

Stage 3: Evaluate Intelligence Depth

Execution capability tells you what the platform does. Intelligence depth tells you how well it does it and how quickly it improves.

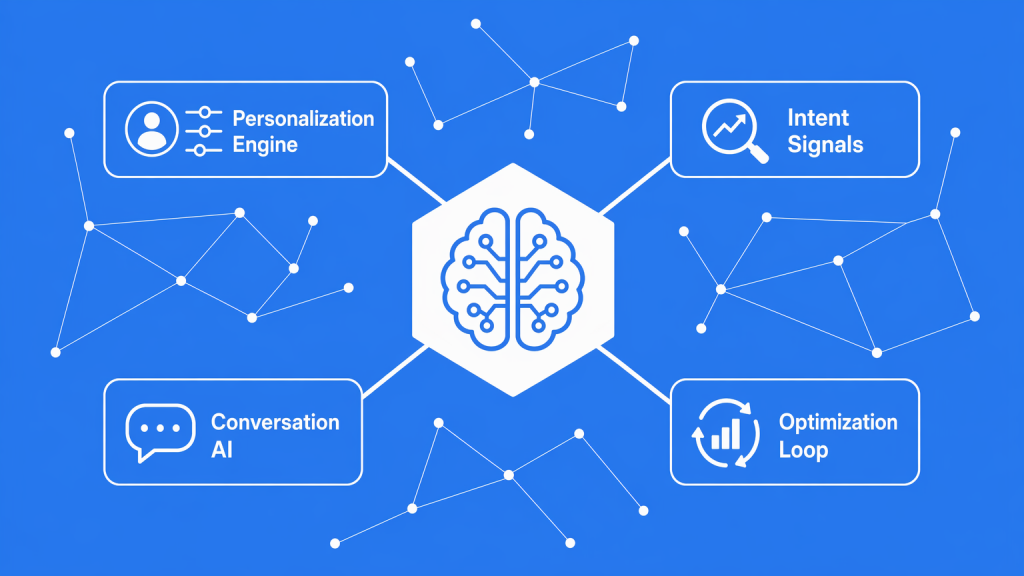

Personalization quality. Every platform claims personalization at scale. The meaningful variation is in what data sources feed the personalization engine and how the system uses that data. Some platforms pull from rich external sources, including company news, technology stack data, job postings, funding events, and industry trend data, and weave those signals into genuinely contextual messaging. Others rely primarily on basic firmographic data and produce personalization that reads like a slightly enhanced mail merge. The most reliable test is to provide a list of your actual target prospects and ask each vendor to generate sample outreach for those specific contacts. Curated demo samples always look good. Real-world output against your prospects reveals actual personalization depth.

Intent signal processing. How does the platform identify and act on buying signals? Evaluate the breadth of intent data sources the platform integrates with and how it combines multiple signals into timing decisions. Strong platforms synthesize signals across categories: hiring patterns, technology changes, funding events, content consumption, and competitive movements. Ask for measured conversion rate differences between intent-triggered outreach and baseline outreach. Vendors confident in their intent capabilities will have this data segmented by industry and market.
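A vendor may quote the intent advantage as an absolute difference in conversion rate or as a relative lift, and the two can look very different. Here is a minimal Python sketch of both calculations. The figures are hypothetical, and the helper names (`conversion_rate`, `relative_lift`) exist only for illustration.

```python
def conversion_rate(conversions: int, contacts: int) -> float:
    """Share of contacted prospects who converted (for example, booked a meeting)."""
    if contacts <= 0:
        raise ValueError("contacts must be positive")
    return conversions / contacts


def relative_lift(treatment_rate: float, baseline_rate: float) -> float:
    """How much higher the treatment rate is than the baseline, as a fraction of the baseline."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (treatment_rate - baseline_rate) / baseline_rate


# Hypothetical figures a vendor might report for one industry segment.
intent = conversion_rate(conversions=45, contacts=1_000)    # intent-triggered outreach
baseline = conversion_rate(conversions=30, contacts=1_000)  # baseline outreach
print(f"absolute difference: {intent - baseline:.1%}")  # absolute difference: 1.5%
print(f"relative lift: {relative_lift(intent, baseline):.0%}")  # relative lift: 50%
```

In this example, a 1.5-point absolute difference and a 50 percent relative lift describe the same result. Ask which figure the vendor is reporting, and request the underlying counts for each segment.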

Conversation handling. Among the intelligence dimensions, this capability tends to vary the most across platforms. When a prospect replies, what happens? Basic platforms classify responses as positive, negative, or neutral and route accordingly. Advanced platforms manage multi-turn conversations, handle common objections, answer product questions, and navigate scheduling discussions independently.

The range of scenarios a platform handles without human escalation directly impacts how much operational time your team spends managing the system. Ask for specific examples of conversation types the system manages independently. Ask what triggers escalation to a human team member.

Optimization speed. How quickly does the platform improve its own performance? Most AI sales agent platforms incorporate feedback loops, but the speed and depth of optimization vary. Evaluate what metrics the system optimizes against, how frequently optimization cycles run, and how much improvement is typically visible over the first three to six months. Ask for time-to-optimization benchmarks from implementations similar to yours.

Stage 4: Evaluate Operational Fit

A platform can score well on execution and intelligence and still fail in implementation if it does not fit your operational environment.

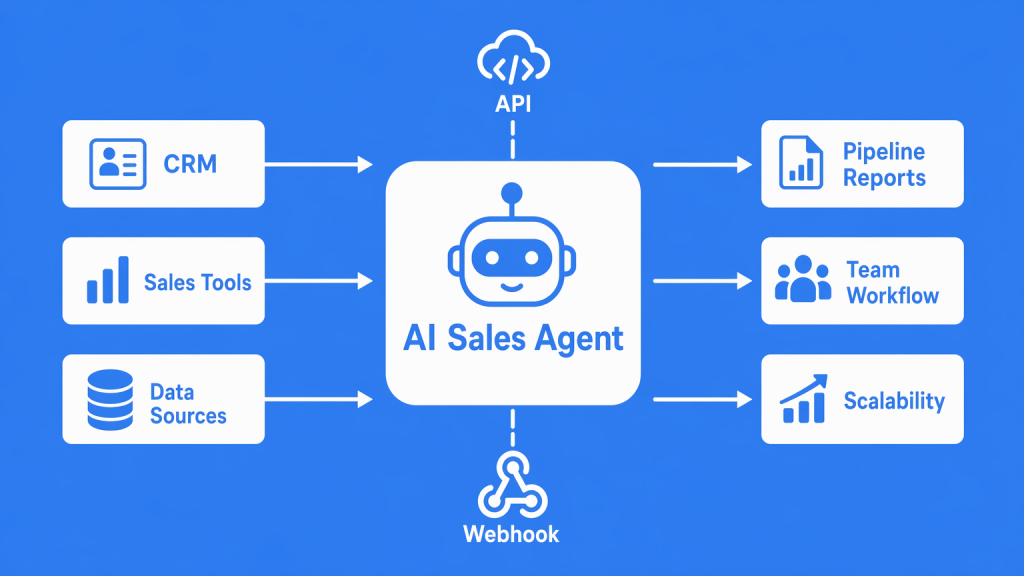

Team workflow integration. How does the platform fit into your team's daily work? Evaluate the interaction model: what does your team actually do on a daily, weekly, and monthly basis to work with the system? Platforms that require minimal ongoing operational management allow your team to focus on strategy and high-value prospect conversations. Platforms that require heavy daily oversight effectively add a new operational workload. Ask each vendor to describe a typical week for a customer team using their platform, and validate that description with customer references.

Data flow and system connectivity. Evaluate how information moves between the AI sales agent platform and your existing tools. The key question is whether prospect data, engagement history, and pipeline information flow smoothly into your sales workflow and reporting systems. Different platforms approach this differently. Some offer direct integrations with major CRM and sales tools. Others provide flexible data export, API access, or webhook-based connectivity that give your team control over how and when data syncs. The right approach depends on your technical environment and how your team currently manages data across tools. Ask each vendor to map the specific data flow for your tool stack and identify any manual steps required to keep your systems in sync.

Scalability. How do platform performance and cost change as you grow? A platform that works well for 5,000 prospects per month may behave differently at 50,000. Ask for specific examples from customers who have scaled their usage over 12 or more months, including any performance changes, cost increases, or operational adjustments that were required at higher volumes.

Stage 5: Validate Commercial Value

The final stage moves from capability evaluation to business case validation.

Pricing model transparency. Understand the full cost structure before making a decision. Per-seat, per-contact, per-action, and platform fee models each have different scaling implications. A per-contact model that looks affordable at your current volume may become expensive as you scale into new markets. Identify specifically where costs increase as your usage grows, and ask about any costs that are not included in the base platform price, such as data enrichment fees, additional language support, premium integrations, or onboarding services.
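To see where costs increase as usage grows, run each quoted pricing model at both your current volume and your projected volume. The prices below are placeholders, not market rates, and the function names are hypothetical. Substitute the figures from each vendor's actual quote.

```python
from typing import Callable


# Hypothetical monthly price structures; replace with each vendor's real quote.
def per_seat(seats: int, contacts: int) -> float:
    return seats * 1_000.0  # flat placeholder price per seat, independent of volume


def per_contact(seats: int, contacts: int) -> float:
    return contacts * 0.50  # placeholder charge per contact reached


def platform_fee_plus_usage(seats: int, contacts: int) -> float:
    return 3_000.0 + contacts * 0.10  # fixed platform fee plus a smaller usage charge


MODELS: dict[str, Callable[[int, int], float]] = {
    "per-seat": per_seat,
    "per-contact": per_contact,
    "platform fee + usage": platform_fee_plus_usage,
}


def monthly_costs(seats: int, contacts: int, extras: float = 0.0) -> dict[str, float]:
    """Monthly cost under each model, plus extras the base price excludes
    (for example, data enrichment, additional languages, premium integrations)."""
    return {name: model(seats, contacts) + extras for name, model in MODELS.items()}


for volume in (5_000, 50_000):
    print(volume, monthly_costs(seats=3, contacts=volume))
```

With these placeholder numbers and three seats, the per-contact model is the cheapest at 5,000 contacts per month ($2,500 versus $3,000 and $3,500). At 50,000 contacts it becomes the most expensive ($25,000 versus $3,000 and $8,000). The per-seat cost stays flat only because the sketch keeps the seat count fixed. If growth also means more seats, model that as well.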

ROI validation method. How does the vendor measure and report ROI? This question matters because ROI calculations are only meaningful if the methodology matches how your business defines success. A vendor reporting impressive cost-per-meeting savings may be measuring against a baseline that does not reflect your current cost structure. Before accepting vendor ROI projections, define your own calculation methodology: what costs you include, what performance metrics you track, and over what timeframe you expect to evaluate results.
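Your own ROI methodology can be as simple as fixing three things: which costs count, which meetings count, and which period you measure. Then apply the same rules to your current process and to the platform. The sketch below shows one hypothetical way to express that in Python. The cost categories, field names, and figures are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class PeriodResult:
    """Costs and outcomes for one evaluation window, as defined by your team."""
    platform_cost: float     # subscription plus any extras outside the base price
    internal_cost: float     # staff time spent running or reviewing outbound
    qualified_meetings: int  # meetings that meet your own quality standard

    def cost_per_meeting(self) -> float:
        if self.qualified_meetings == 0:
            return float("inf")
        return (self.platform_cost + self.internal_cost) / self.qualified_meetings


def savings_vs_baseline(current: PeriodResult, baseline: PeriodResult) -> float:
    """Fractional reduction in cost per meeting relative to your own baseline."""
    base = baseline.cost_per_meeting()
    if base == float("inf"):
        raise ValueError("baseline period must include at least one qualified meeting")
    return (base - current.cost_per_meeting()) / base


# Hypothetical quarter: manual outbound vs. a platform pilot, measured the same way.
manual = PeriodResult(platform_cost=6_000, internal_cost=54_000, qualified_meetings=120)
pilot = PeriodResult(platform_cost=24_000, internal_cost=18_000, qualified_meetings=105)
print(manual.cost_per_meeting())  # 500.0
print(pilot.cost_per_meeting())   # 400.0
print(f"{savings_vs_baseline(pilot, manual):.0%}")  # 20%
```

The pilot cuts cost per meeting by 20 percent but books fewer qualified meetings (105 versus 120). Report the meeting count next to the ratio in any ROI comparison, because the ratio alone hides a drop in volume.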

Customer evidence quality. Ask for case studies and references from companies that match your profile on three dimensions: industry, team size, and target market geography. A case study from a 500-person enterprise selling domestically provides limited predictive value for a 30-person team selling across 10 international markets. The strongest validation comes from references you can speak with directly who operate in an environment comparable to yours.

Contract structure. Evaluate commitment length, exit terms, and what happens to your data if you decide to switch platforms. Flexibility in contract structure often reflects vendor confidence in their product's retention rates. Ask what the typical renewal rate looks like and what the most common reasons are for customers who choose not to renew.

Bringing It Together: Using Your Weighted Scorecard

Return to the requirements profile you built in Stage 1. Your weighted priorities should now shape how you score each vendor across the four evaluation stages.

A straightforward scoring approach works best. Rate each vendor from 1 to 5 on each dimension, multiply each rating by the numeric weight you assign to its priority level (for example, 3 for critical, 2 for important, and 1 for nice-to-have), and compare total weighted scores. The framework is deliberately simple because the value is in the structured comparison process, not in scoring precision.
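The scoring arithmetic fits easily in a spreadsheet, but it can also be written out in a few lines. This Python sketch assumes a hypothetical 3/2/1 weighting for critical, important, and nice-to-have. The dimension names, vendors, and ratings are invented for illustration.

```python
# Hypothetical mapping from Stage 1 priority levels to numeric weights.
PRIORITY_WEIGHTS = {"critical": 3, "important": 2, "nice-to-have": 1}


def weighted_total(priorities: dict[str, str], ratings: dict[str, int]) -> int:
    """Sum of (1-5 rating times priority weight) across every scorecard dimension."""
    total = 0
    for dimension, level in priorities.items():
        rating = ratings[dimension]  # a KeyError here flags a dimension left unscored
        if not 1 <= rating <= 5:
            raise ValueError(f"{dimension}: rating must be between 1 and 5")
        total += rating * PRIORITY_WEIGHTS[level]
    return total


# Example profile for a team selling into many markets (dimensions abbreviated).
priorities = {
    "multilingual readiness": "critical",
    "automation depth": "important",
    "conversation handling": "important",
    "scalability": "nice-to-have",
}
vendor_ratings = {
    "Vendor A": {"multilingual readiness": 5, "automation depth": 3,
                 "conversation handling": 4, "scalability": 2},
    "Vendor B": {"multilingual readiness": 3, "automation depth": 5,
                 "conversation handling": 5, "scalability": 4},
}
for vendor, ratings in vendor_ratings.items():
    print(vendor, weighted_total(priorities, ratings))
```

With these ratings, Vendor A totals 31 and Vendor B totals 33. B pulls ahead on two important dimensions even though A rates higher on the one critical dimension. Check which dimensions drive a lead before treating the total as final.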

Two practical notes on using the scorecard effectively. First, complete the scorecard immediately after each vendor conversation while details are fresh. Retrospective scoring across multiple vendors introduces recency bias and makes the comparison less reliable.

Second, if two vendors score within 10 percent of each other, the scorecard has done its job by narrowing the field to your strongest options. The final decision between closely matched platforms often comes down to implementation experience, team confidence, and vendor responsiveness during the evaluation process. These are legitimate tiebreakers.
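The 10 percent rule leaves open how the gap is measured. This sketch assumes the gap is measured relative to the highest total. Under that assumption, a small hypothetical Python helper can pull out the close contenders. The function name and totals are illustrative only.

```python
def close_contenders(totals: dict[str, float], tolerance: float = 0.10) -> list[str]:
    """Vendors whose weighted total is within `tolerance` of the top score
    (gap measured as a fraction of the top score), highest score first."""
    if not totals:
        return []
    top = max(totals.values())
    shortlist = [vendor for vendor, score in totals.items() if top - score <= tolerance * top]
    return sorted(shortlist, key=lambda vendor: totals[vendor], reverse=True)


totals = {"Vendor A": 31, "Vendor B": 33, "Vendor C": 26}  # hypothetical weighted totals
print(close_contenders(totals))  # ['Vendor B', 'Vendor A']
```

Vendor C falls outside the band (a gap of 7 against an allowance of 3.3), so the final tiebreakers apply only to Vendors A and B.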

From Evaluation to Decision

A structured evaluation framework saves time and produces decisions that hold up under scrutiny. When your team can point to a documented process with defined criteria and weighted scores, the platform choice carries organizational credibility.

The framework also changes the dynamic of vendor conversations. When you arrive with defined requirements, weighted criteria, and specific questions for each evaluation dimension, the conversation shifts from a vendor-led pitch to a buyer-led assessment. You learn more, waste less time, and reach a decision faster.

With your evaluation framework established, the next step in building a complete picture is understanding the specific pricing models and cost structures across the AI sales agent market. Pricing complexity is one of the areas where surface-level comparison is most likely to produce surprises after signing.